Hey Plex

More potential. Fewer steps. A calmer phone experience.

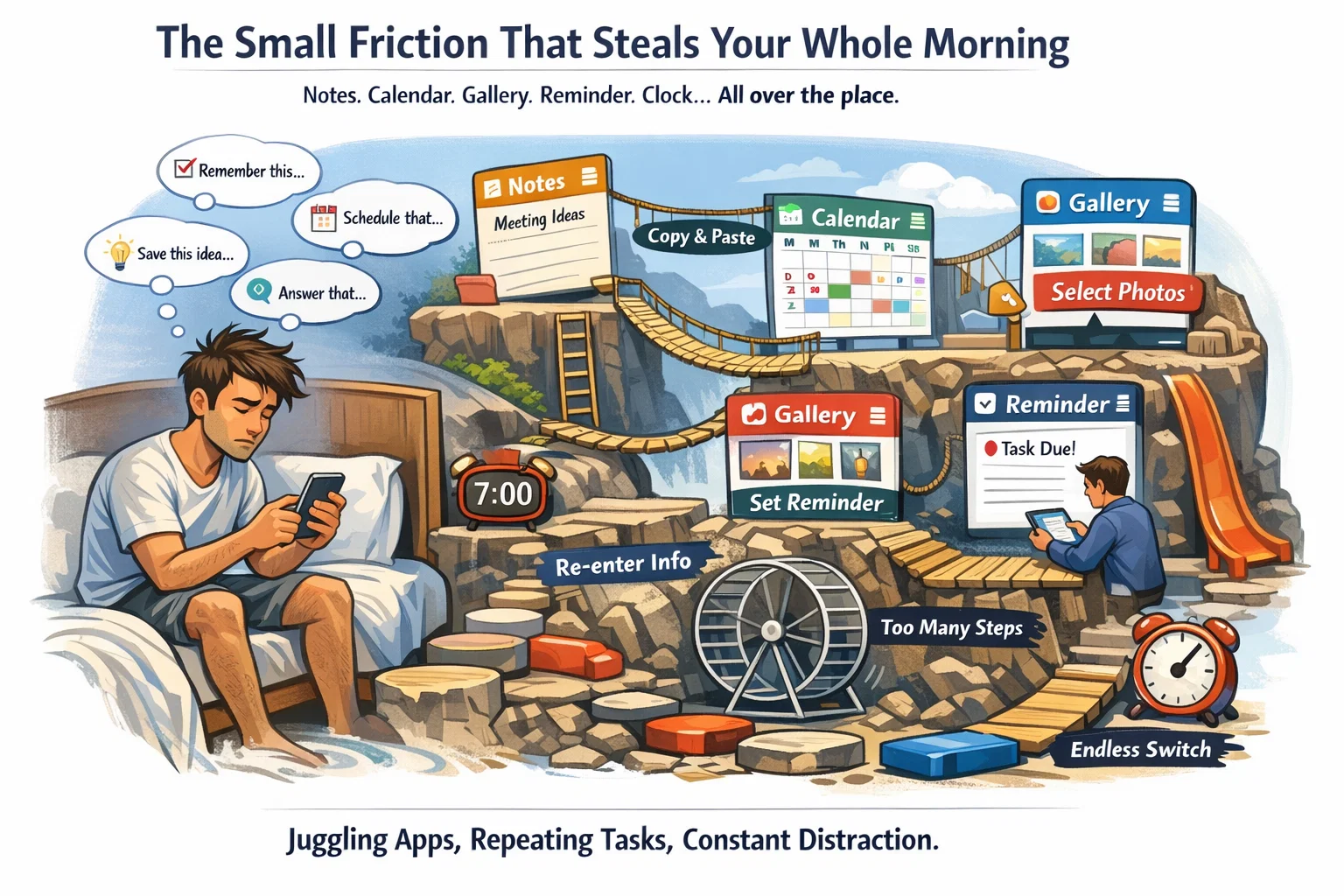

The small friction that steals your whole morning

You wake up with good intentions. Not “change my life” intentions. Just the simple kind: get through the day without feeling like your phone is running you.

You open your screen and think: I need to remember this, schedule that, save this idea, pick the best photos, answer that message. That should be easy. Instead, it becomes a mini obstacle course. Notes, Calendar, Gallery, Reminder, Clock — different islands, different rules. You copy and paste your own thoughts across apps like you’re doing admin work for your own life.

This is the part people rarely say out loud: the modern smartphone is powerful, but it’s also noisy. It demands attention. And most “AI features” — as impressive as they may look in demos — still leave you doing the hard part: switching, managing, stitching.

Samsung’s new push for Galaxy AI is built around a deceptively simple promise: reduce effort and steps across everyday tasks.

Not by adding more buttons, but by making the phone understand what you’re trying to do — and helping you get there with fewer interruptions.

And the way Samsung says it will do this is a strategic shift the industry is quietly moving toward: multi-agent AI.

The industry confession

People don’t use one AI anymore

There’s a reason this shift is happening now. People are no longer faithful to a single assistant. They’re situational. One AI is good for writing. Another for discovery. Another for voice. Another feels more trustworthy, depending on the topic.

Samsung cites internal research showing this behavior is becoming the norm: nearly 8 in 10 users rely on more than two types of AI agents, choosing based on the task as AI becomes embedded in daily routines.

That’s the market reality: the future isn’t “one assistant wins.” The future is “your device helps you choose — without making your life complicated.”

So Samsung is evolving Galaxy AI to support a choice of integrated agents, so users can pick what best fits their needs, preferences, and routines.

Samsung’s big bet

The OS becomes the stage, not the apps

Here’s the part that matters most — and it’s technical, but it changes everything.

Samsung describes Galaxy AI as being built to bring meaningful AI directly into the operating system through deep, framework-level connections across the device. Rather than operating inside individual apps, Galaxy AI works at the system level, understanding context and supporting more natural interactions.

If that sounds abstract, translate it into human experience:

- less bouncing between apps
- fewer repeated commands
- more “flow” across tasks
- AI that works in the background instead of demanding you manage it like a tool

Samsung also explicitly says this system-level approach lets it curate experiences from supporting services — such as Perplexity — while keeping everything seamlessly integrated inside the Galaxy environment.

This isn’t just “add AI.” This is “reshape the architecture of everyday interaction.”

Leadership & strategy

Why “orchestration” is the keyword

This announcement isn’t framed as a feature drop. It’s framed as ecosystem direction.

Samsung’s Won-Joon Choi (President, COO, and Head of the R&D Office, Mobile eXperience Business) emphasizes a commitment to an open, inclusive, integrated AI ecosystem built to give users more choice, flexibility, and control — especially for complex tasks.

Then he uses the word that reveals the strategy:

“Galaxy AI acts as an orchestrator.”

Orchestrator is not marketing fluff. It’s a product philosophy: different AI forms can exist, but the user shouldn’t feel the seams. The phone should feel like a single coherent experience — calm, cohesive, and natural.
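For readers who think in code, the orchestrator idea can be pictured as a small router sitting in front of several agents. The sketch below is a toy illustration only: the agent functions, the keyword rules, and the `Orchestrator` class are all hypothetical, and nothing here describes how Samsung actually builds Galaxy AI.

```python
from typing import Callable, Dict

# Hypothetical sketch: none of these names come from Samsung or Perplexity.
Agent = Callable[[str], str]


def research_agent(request: str) -> str:
    return f"[research] looking up: {request}"


def planning_agent(request: str) -> str:
    return f"[planning] scheduling: {request}"


def general_agent(request: str) -> str:
    return f"[general] handling: {request}"


class Orchestrator:
    """Gives the user one entry point and picks an agent behind the scenes."""

    def __init__(self, fallback: Agent) -> None:
        self._routes: Dict[str, Agent] = {}
        self._fallback = fallback

    def register(self, keyword: str, agent: Agent) -> None:
        # Assumed routing rule: a single trigger word selects the agent.
        self._routes[keyword.lower()] = agent

    def handle(self, request: str) -> str:
        words = request.lower().split()
        for keyword, agent in self._routes.items():
            if keyword in words:
                return agent(request)
        # No trigger word matched, so the general-purpose agent answers.
        return self._fallback(request)


orchestrator = Orchestrator(fallback=general_agent)
orchestrator.register("find", research_agent)
orchestrator.register("remind", planning_agent)
```

The point of the design is that the caller only ever talks to `orchestrator.handle(...)`. Which agent answers is a detail the user never has to manage, which is the "no visible seams" idea in miniature.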

Perplexity

An additional Galaxy AI agent

Samsung says that as part of this multi-agent expansion, it will introduce Perplexity as an additional AI agent on upcoming flagship Galaxy devices.

Access is designed to be frictionless:

- a dedicated voice wake phrase: “Hey Plex”
- quick access controls, such as pressing and holding the side button

But the bigger claim is integration. Samsung says Perplexity will be deeply embedded across select Samsung apps, including:

- Samsung Notes
- Clock
- Gallery
- Reminder
- Calendar

…and select third-party apps (details to come).

And the point of that embedding is not novelty. It’s workflow: smoother multi-step tasks, moving between actions without manually managing apps.

The guide

What you’ll actually feel

Let’s drop the hype and make it tangible. If Samsung executes this well, the experience should feel like:

From thought → plan, without the messy middle

You say: “Remind me to send the proposal tomorrow morning.”

The phone understands that you’re referring to a task, a time, and a level of urgency, and it turns that into an actionable reminder and/or calendar block without you opening multiple apps.
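To make that step concrete, here is a toy Python sketch of turning one spoken sentence into a structured reminder. Everything in it is an assumption made for illustration: the `Reminder` type, the `parse_reminder` function, and the rule that “morning” means 9:00. A real assistant would rely on a language model rather than a regular expression.

```python
import re
from dataclasses import dataclass
from datetime import date, datetime, time, timedelta
from typing import Optional

# Assumed defaults for vague times of day (hypothetical values).
PART_OF_DAY = {
    "morning": time(9, 0),
    "afternoon": time(14, 0),
    "evening": time(19, 0),
}

# Matches sentences shaped like "Remind me to <task> today|tomorrow [part of day]".
_PATTERN = re.compile(
    r"^remind me to (?P<task>.+?) (?P<day>today|tomorrow)"
    r"(?: (?P<part>morning|afternoon|evening))?\.?$",
    re.IGNORECASE,
)


@dataclass
class Reminder:
    task: str
    due: datetime


def parse_reminder(utterance: str, today: date) -> Optional[Reminder]:
    """Return a Reminder, or None if the sentence does not fit the toy pattern."""
    match = _PATTERN.match(utterance.strip())
    if match is None:
        return None
    offset = 1 if match["day"].lower() == "tomorrow" else 0
    # If no part of day was spoken, this sketch falls back to "morning".
    part = (match["part"] or "morning").lower()
    due = datetime.combine(today + timedelta(days=offset), PART_OF_DAY[part])
    return Reminder(task=match["task"], due=due)
```

Calling `parse_reminder("Remind me to send the proposal tomorrow morning.", date(2026, 2, 24))` yields a reminder whose task is "send the proposal" and whose due time falls on the following morning. The result is plain data, so the same object could feed a reminder list, a calendar block, or both.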

Notes that don’t just sit there

You capture a meeting note. The AI helps you:

- extract action items
- create reminders
- schedule follow-ups
- and keep it connected to the original note for context

This is why it matters that Notes, Calendar, and Reminder are all on the integration list.

A gallery that behaves like a curator

“Find the best photos from Friday, not the blurry ones.”

Instead of scrolling through 120 near-duplicates, you get a shortlist you can actually use.

Clock that understands you have a life

Clock integration hints at routines that adapt: timers, focus blocks, sleep cues, morning schedules — the parts of daily life that should feel supportive, not manual.

Key Highlights

Samsung is building Galaxy AI around reducing effort and steps in daily tasks.

Users increasingly use multiple AI agents; Samsung cites internal research in which nearly 8 in 10 users rely on more than two types.

Galaxy AI is positioned as OS-level intelligence via deep framework connections.

Samsung calls Galaxy AI an “orchestrator” for a cohesive multi-agent experience.

Perplexity is coming as an additional AI agent with “Hey Plex” + side-button access.

Perplexity is described as embedded across Notes, Clock, Gallery, Reminder, Calendar (and some third-party apps).

Details about supported devices/experiences will be announced soon.

Decision Lenses Comparison

Lens 1: Freedom vs. Familiarity

- Samsung’s direction: freedom of choice inside an integrated ecosystem, multi-agent by design.
- Single-assistant ecosystems: familiarity and consistency, with one “lane” and fewer decisions.

Who this fits:

If you like tailoring tools to tasks, Samsung’s approach aligns with you. If you want one consistent flow with fewer options to manage, single-assistant ecosystems can feel calmer — as long as the one assistant meets your needs.

Lens 2: Where AI lives — app layer or OS layer

- Samsung’s claim: Galaxy AI lives at the system level, built into the OS through deep connections.
- Many AI implementations today still feel like “an app you open” or a feature silo.

Who this fits:

If you hate switching apps and repeating yourself, OS-level AI is the only approach that can truly reduce friction — when implemented well.

Lens 3: The everyday win — answers vs. actions

- Some AI is excellent at answering.
- Samsung’s pitch is about actions: multi-step workflows that move between apps smoothly.

Who this fits:

If your life is meetings, reminders, notes, photos, and schedules — action-based AI matters more than clever chat.

Lens 4: Lock-in vs. openness

Samsung explicitly emphasizes an open and inclusive ecosystem with more choice and control.

Who this fits:

If you don’t want your AI future decided by one vendor, openness is not ideology — it’s practical power.

Your Phone Shouldn’t Feel Like a To-Do List

This move reflects a broader shift: the world is moving from “AI as a feature” to “AI as a layer.”

Multi-agent is becoming normal, because humans are multi-contextual.

OS-level integration is becoming a competitive battleground.

Trust and control are becoming purchase factors, not afterthoughts.

This announcement also has a clear regional spotlight: Samsung’s press release is datelined Dubai, United Arab Emirates – Feb. 24, 2026, signaling how important the Middle East has become as an innovation showcase and premium device market.

FAQ

Q1: What is Samsung’s multi-agent Galaxy AI ecosystem?

It’s Samsung’s expansion of Galaxy AI to support a choice of integrated AI agents, reflecting user behavior where people use multiple AI tools depending on the task.

Q2: Is Perplexity coming to Samsung Galaxy phones?

Samsung says it will introduce Perplexity as an additional AI agent on upcoming flagship Galaxy devices.

Q3: How do users access Perplexity on Galaxy?

Samsung states users can access it via the “Hey Plex” wake phrase or quick controls such as pressing and holding the side button.

Q4: Which Samsung apps will Perplexity be integrated into?

Samsung lists Samsung Notes, Clock, Gallery, Reminder, and Calendar, plus select third-party apps.

Q5: What does Samsung mean by Galaxy AI working at the system level?

Samsung says Galaxy AI is built into the operating system through deep connections, using context to support more natural interactions and reduce app switching and repeated commands.

Q6: Will Galaxy AI features be available everywhere?

Samsung notes availability may vary by region/country, OS/One UI version, device model, and carrier, and some AI features may require a Samsung Account login.

Q7: When will Samsung share device support details?

Samsung says additional details about supported devices and experiences will be announced soon.

Galaxy AI

A calmer kind of intelligence

A lot of AI marketing is loud. It promises brilliance, disruption, transformation — and then gives you another interface to manage.

Samsung’s multi-agent direction is interesting because it’s aiming for something quieter: less effort, fewer steps, more natural flow.

If you zoom out, Samsung isn’t just adding another assistant. It’s making a statement about the next phase of AI: the era of the AI “monopoly” is ending.

People don’t want to be told which assistant to trust, which agent to use, or which personality to adopt. They want outcomes. They want less friction. They want that rare feeling that technology is helping rather than demanding attention.

A well-built multi-agent ecosystem can do something quietly revolutionary: it can let each AI specialize (research, planning, creativity, execution) while the phone itself becomes the calm, cohesive bridge between them.

If Galaxy AI truly becomes what Samsung describes — OS-level intelligence that understands context, works in the background, and orchestrates multiple agents into one cohesive experience — then the most meaningful change won’t be what you can ask your phone.

It’ll be what you no longer have to do.

Less app hopping. Less repeating yourself. Less friction between intention and action. And more of that rare feeling that your device is finally adapting to you — not the other way around.