Samsung × OpenAI

When AI Becomes a Supply Chain

Artificial intelligence is no longer just a collection of digital models floating through the cloud. It has become something tangible—a chain of physical decisions about silicon chips, packaging, server racks, power consumption, water usage, and geography. The Samsung–OpenAI partnership transforms this understanding into action: a letter of intent that brings together four Samsung divisions to build an AI infrastructure designed as a single product, from chip to deployment.

It starts with the hardest truth: models don’t run on compute alone. They run on memory, interconnects, and power—and on the logistics that make these three work in harmony.

For years, artificial intelligence felt like a cloud-based abstraction: invisible models, polished APIs, and stage demos that slowed to a crawl on launch night. The Samsung × OpenAI collaboration turns AI into a deliberately sequenced supply chain: DRAM capacity measured in wafers per month, advanced packaging that shortens the distance between memory and compute, data centers managed as engineered products with clear owners and performance metrics, and even strategic siting—placing capacity where it keeps experiences fast when everyone shows up at once.

This isn’t a marketing campaign. It’s a letter of intent signed in Seoul in October 2025 between Samsung Electronics, Samsung SDS, Samsung C&T, Samsung Heavy Industries, and OpenAI, to accelerate AI infrastructure globally and co-develop the technologies that will power what comes next.

Samsung × OpenAI partnership

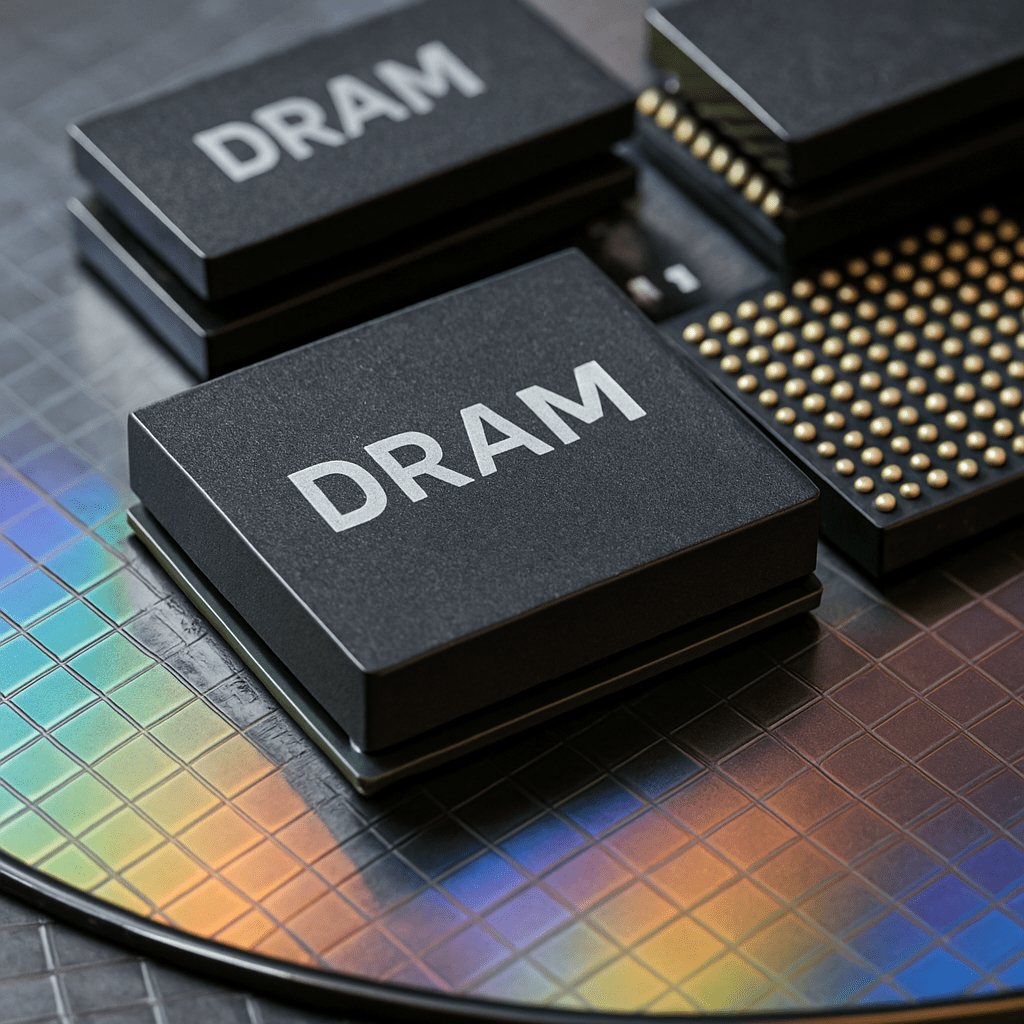

The problem isn’t just compute. It’s memory, and proximity. As the strategic memory partner for the Stargate initiative, Samsung Electronics is planning to meet demand that could reach 900,000 DRAM wafers per month, with advanced chip packaging and heterogeneous integration to reduce the physical distance between model and memory—meaning lower latency and higher stability when demand spikes.

And capacity must be managed. Samsung SDS treats AI data centers as a single product—from design through development to operations—and becomes an OpenAI reseller in Korea (including ChatGPT Enterprise), making enterprise adoption smoother with local support and compliance. When the path is clear for institutions, end users feel a calmer, faster experience.

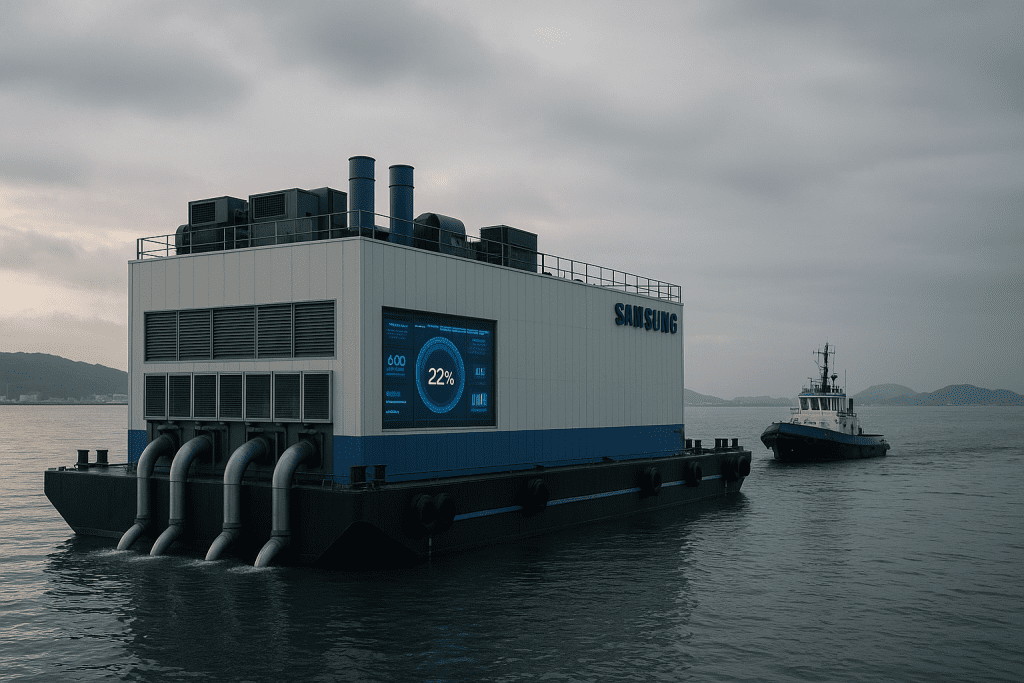

Location is part of the design. Samsung C&T and Samsung Heavy Industries are developing floating data centers—offshore infrastructure that eases land pressure, improves cooling economics, and opens pathways to lower carbon over time. Floating power plants and control centers are also being explored. Intelligence that anchors near demand.

At the silicon layer, Samsung Electronics arrives as OpenAI’s strategic memory partner for Stargate, envisioning demand that could reach 900,000 DRAM wafers monthly—a figure revealing that memory proximity to compute is the real currency of scale, with advanced packaging and heterogeneous integration bringing memory closer to logic.

At the infrastructure layer, Samsung SDS treats AI data centers as an engineered product—from design through deployment to operations—and opens a practical adoption path for Korean enterprises by reselling OpenAI services (including ChatGPT Enterprise) with local support and compliance.

At the geography layer, Samsung C&T and Samsung Heavy Industries are studying the eternal bottleneck: land. The solution is both bold and practical—floating data centers near water to lower cooling costs and ease land pressure, with prospects for floating power plants and control centers. Intelligence that docks where demand lives.

This is the shift from “AI as software” to AI as supply chain—from chip to watt, from port to packet—backed by a national ambition to place Korea among the world’s top three in artificial intelligence.

Why This Matters Today

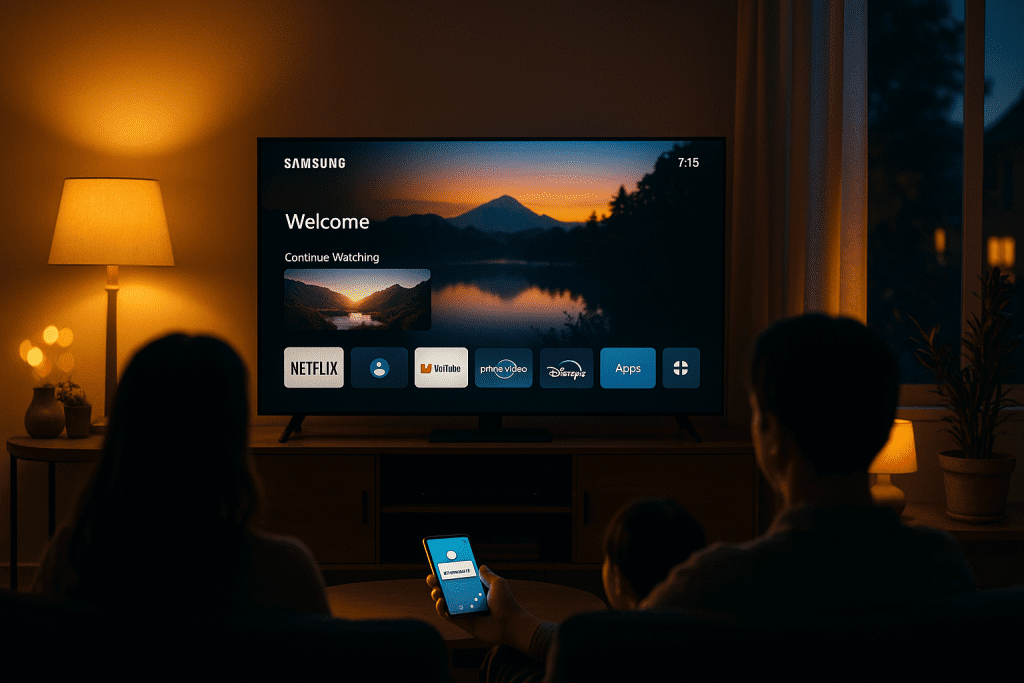

This partnership doesn’t promise you flashy headlines, but something more important: services that work reliably. Fast response during peak hours, recommendations that don’t collapse on launch night, and translation that runs at street speed, not network crawl. The reason is simple: memory, packaging, location, and operations are now designed as one system, not scattered pieces. The result? Lower latency, higher sustainability, and reliability you feel in your phone, TV, home, and workplace.

What’s New — From Vendor List to Operating Plan

Memory Sets the Pace

The question is no longer “how much compute do we have?” but “how do we feed the model its context at the right time?” So DRAM is treated as the pacing item: capacity measured in wafers per month, advanced packaging that shortens the physical distance between memory and compute, and heterogeneous integration that reduces travel time inside the chip itself. This is the difference between a feature that works in the lab and a feature that survives launch night.
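To see why memory can set the pace, a rough back-of-the-envelope sketch helps. It assumes the common simplification that generating one token requires reading every model weight from memory once, so memory bandwidth alone puts a floor under per-token latency. The model size and bandwidth figures are hypothetical illustrations, not numbers from the partnership.

```python
def min_decode_latency_ms(model_bytes: float, mem_bandwidth_bytes_per_s: float) -> float:
    """Lower bound on per-token latency if every weight is read once per token.

    Ignores compute time, caching, and batching; it is a floor, not a prediction.
    """
    if mem_bandwidth_bytes_per_s <= 0:
        raise ValueError("bandwidth must be positive")
    return model_bytes / mem_bandwidth_bytes_per_s * 1000.0


# Hypothetical model: 70 billion parameters stored at 2 bytes each.
model_bytes = 70e9 * 2

# Hypothetical memory systems: 1 TB/s versus 4 TB/s of bandwidth.
slow_ms = min_decode_latency_ms(model_bytes, 1e12)
fast_ms = min_decode_latency_ms(model_bytes, 4e12)

print(f"floor at 1 TB/s: {slow_ms:.0f} ms per token")
print(f"floor at 4 TB/s: {fast_ms:.0f} ms per token")
```

Under these assumptions, no amount of extra compute lowers the floor; only more bandwidth or a shorter path between memory and logic does.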

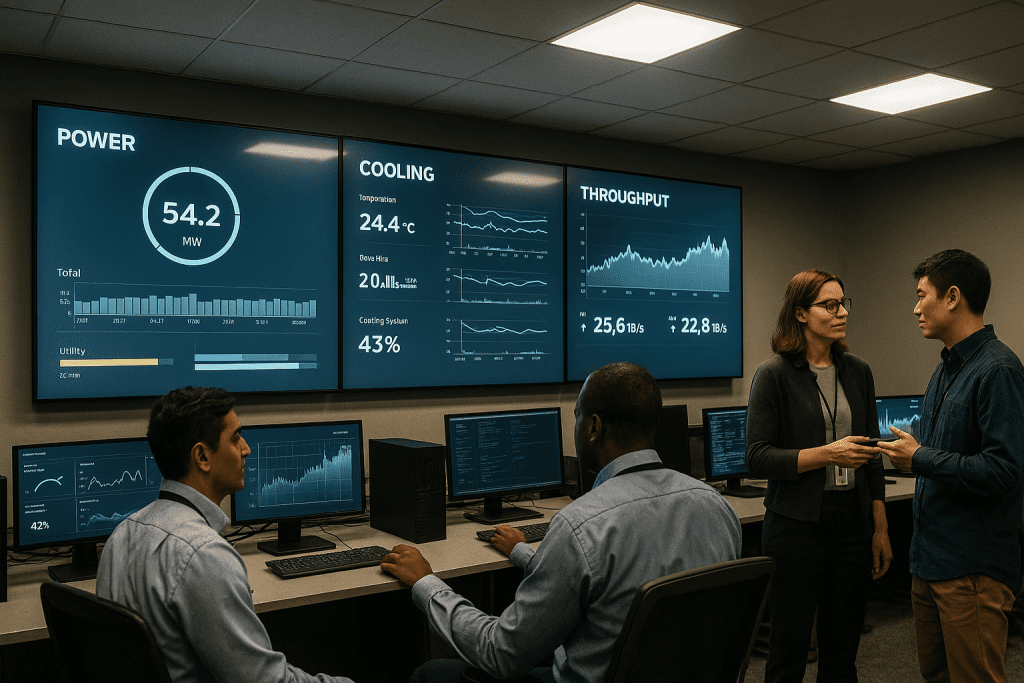

Centers as a Product with Clear Ownership

When design, development, and operations fall under a single owner, centers are managed by tangible metrics: throughput, SLOs, cost-per-token, and energy per inference. When centers are managed with this mindset, failures drop and upgrades become quiet events, not service storms.
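As an illustration of what managing by these metrics could look like, the sketch below derives throughput, cost per million tokens, and energy per request from one measurement window. The `WindowStats` fields and all figures are hypothetical; the source does not describe how Samsung SDS actually computes its metrics.

```python
from dataclasses import dataclass


@dataclass
class WindowStats:
    """Totals for one measurement window (all fields are illustrative)."""
    tokens_served: int
    requests_served: int
    energy_kwh: float
    cost_usd: float
    seconds: float


def throughput_tokens_per_s(s: WindowStats) -> float:
    return s.tokens_served / s.seconds


def cost_per_million_tokens(s: WindowStats) -> float:
    return s.cost_usd / s.tokens_served * 1_000_000


def energy_wh_per_request(s: WindowStats) -> float:
    return s.energy_kwh * 1000 / s.requests_served


# Hypothetical one-hour window.
hour = WindowStats(tokens_served=2_000_000, requests_served=4_000,
                   energy_kwh=5.0, cost_usd=3.0, seconds=3600.0)

print(f"throughput: {throughput_tokens_per_s(hour):.1f} tokens/s")
print(f"cost: ${cost_per_million_tokens(hour):.2f} per million tokens")
print(f"energy: {energy_wh_per_request(hour):.2f} Wh per request")
```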

Smart Siting, Sometimes on Water

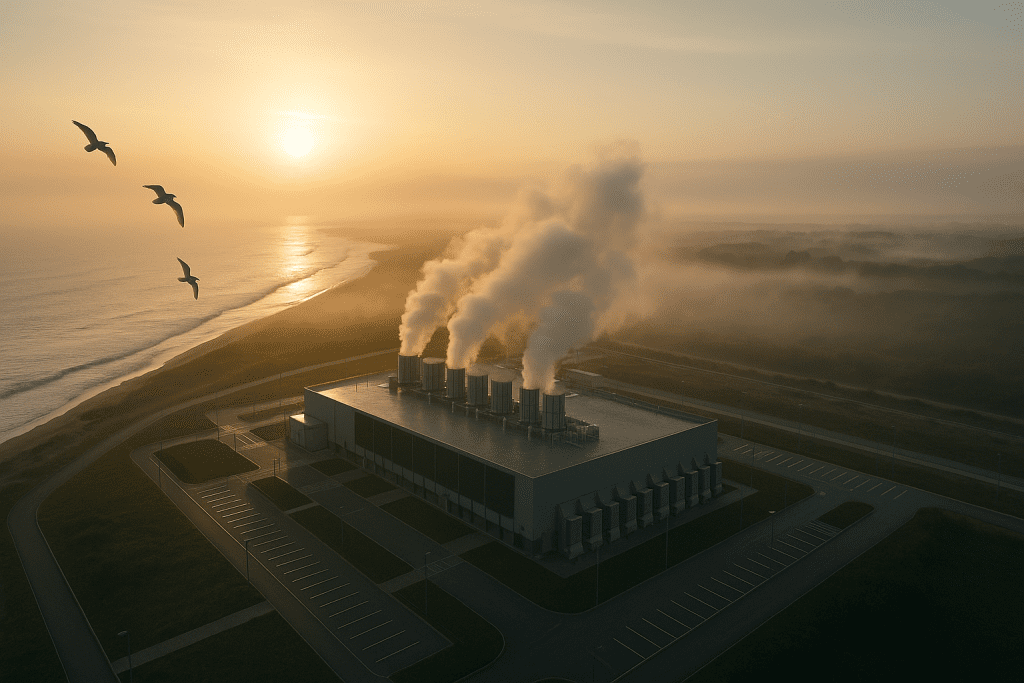

Floating data centers aren’t CGI renders; they’re an engineering solution that places computing capacity near water for better cooling and more stable operating economics, relieves land pressure in coastal cities, and opens the door to lower carbon emissions through floating power plants later. Capacity that anchors where demand exists, rather than being dragged from distant locations.

Who Does What — Clear Role Distribution

Samsung Electronics: The heart of memory and chips. It is planning for DRAM demand that could reach 900,000 wafers per month, with advanced packaging and heterogeneous integration that bring memory physically closer to the compute unit.

Samsung SDS: The operational owner of the design-development-operations chain, and the gateway for enterprise adoption in Korea through its role as an OpenAI reseller (including ChatGPT Enterprise). In practice, that means capacity operated with local compliance and support.

Samsung C&T: Engineering of floating data centers, site planning, and exploration of floating power plants and control centers as a scalable control and power layer.

Samsung Heavy Industries: Maritime expertise and platform manufacturing that turns concept into floating structures operating on realistic maintenance schedules and costs.

Platform and Engineering — From Wafer to Watt

Silicon and Memory

Imagine a highway for data: widening it means producing more wafers per month, bringing exits and entrances closer means advanced packaging, and removing slowdown points inside the node means heterogeneous integration. The result: higher effective bandwidth, lower latency, and less energy spent per unit of work.

Facilities and Operations

The center isn’t just a room filled with server racks; it’s a product designed for real workloads and managed with a unified operating manual. When Samsung SDS speaks the language of site reliability engineering and service-level objectives, quality becomes a measurable promise, not just a slogan: upgrades without downtime, load distribution that accounts for peaks, and performance protection that doesn’t suddenly collapse.
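One common way site reliability engineering turns a service-level objective into a measurable promise is the error budget: the amount of downtime an availability target still permits within a window. The sketch below shows the arithmetic; the 99.9% target and the 30-day window are hypothetical examples, not commitments stated in the letter of intent.

```python
def error_budget_minutes(slo: float, window_days: int = 30) -> float:
    """Minutes of downtime allowed by an availability SLO over a window."""
    if not 0 < slo < 1:
        raise ValueError("slo must be a fraction strictly between 0 and 1")
    return (1 - slo) * window_days * 24 * 60


def budget_remaining_minutes(slo: float, downtime_minutes: float,
                             window_days: int = 30) -> float:
    """Budget left after the downtime already observed (negative if overspent)."""
    return error_budget_minutes(slo, window_days) - downtime_minutes


# Hypothetical target: 99.9% availability over 30 days.
budget = error_budget_minutes(0.999)
left = budget_remaining_minutes(0.999, downtime_minutes=10.0)

print(f"allowed downtime: {budget:.1f} minutes")
print(f"remaining after a 10-minute incident: {left:.1f} minutes")
```

A team working this way can schedule risky upgrades while budget remains and slow down once it is spent, which is one route to the quiet upgrades described above.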

Siting and Sustainability

The question is no longer “which plot of land?” but “which coastline?” Water isn’t scenery; it’s a cooling component. Floating data centers change the equation: better power usage effectiveness, cheaper and cleaner cooling, and floating power options later for generation closer to the point of need. This isn’t an environmental detail; it’s lower operating cost and higher reliability.
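Power usage effectiveness (PUE) is the ratio of total facility energy to the energy consumed by the IT equipment itself, so a value closer to 1.0 means less overhead. The sketch below shows how cheaper cooling moves the ratio. The energy figures are hypothetical and are not measurements from any floating data center.

```python
def pue(it_kwh: float, cooling_kwh: float, other_overhead_kwh: float) -> float:
    """Power usage effectiveness: total facility energy / IT equipment energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    total_kwh = it_kwh + cooling_kwh + other_overhead_kwh
    return total_kwh / it_kwh


# Hypothetical land-based site: cooling costs 400 kWh per 1,000 kWh of IT load.
land_site = pue(it_kwh=1000.0, cooling_kwh=400.0, other_overhead_kwh=100.0)

# Hypothetical water-cooled site: same IT load, cooling drops to 150 kWh.
water_site = pue(it_kwh=1000.0, cooling_kwh=150.0, other_overhead_kwh=100.0)

print(f"land-based PUE: {land_site:.2f}")
print(f"water-cooled PUE: {water_site:.2f}")
```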

Geographic Context — From Seoul to Crowded Coastlines

The letter of intent was signed in Seoul, where semiconductor expertise intersects with advanced infrastructure. But the impact of maritime engineering extends beyond Korea: crowded coastal cities around the world—hot and land-scarce—need computing capacity that doesn’t compete with housing and industry for land. There, floating centers aren’t a “nice” option but a necessary path to expand capacity while preserving cooling economics.

How the Impact Shows in Your Life

Your Phone (Galaxy)

Translation and summarization arrive on time even on a crowded morning train. Scene-aware camera processing recognizes what is in the frame and cleans up image noise without stuttering on the night of a major update, because the memory pipeline is designed for peak moments, not average usage.

Your TV (Samsung TV)

Recommendations and upscaling with low response time during prime viewing hours, with quiet quality adjustment instead of sudden drops when requests pile up.

Your Home (SmartThings)

Energy routines that automatically reschedule devices toward off-peak hours, early fault alerts (washing machine vibration, abnormal refrigerator temperature), and smart mixing between local inference and cloud where privacy and speed both benefit.
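A minimal sketch of the off-peak idea: given an hourly price table, pick the cheapest contiguous window long enough to run an appliance cycle. The function name and the tariff values are hypothetical, and this is not the SmartThings API; it only illustrates the scheduling logic such a routine might use.

```python
def cheapest_start_hour(prices_cents: list[int], duration_h: int) -> int:
    """Start hour of the cheapest contiguous window (no wrap past midnight).

    Ties are resolved in favor of the earliest start hour.
    """
    if not 1 <= duration_h <= len(prices_cents):
        raise ValueError("duration must fit inside the price table")
    cost = sum(prices_cents[:duration_h])
    best_start, best_cost = 0, cost
    for start in range(1, len(prices_cents) - duration_h + 1):
        # Slide the window: add the hour entering, drop the hour leaving.
        cost += prices_cents[start + duration_h - 1] - prices_cents[start - 1]
        if cost < best_cost:
            best_start, best_cost = start, cost
    return best_start


# Hypothetical tariff in cents per kWh for hours 0 through 23.
tariff = [12] + [8] * 5 + [20] * 12 + [30] * 4 + [15] * 2

# A two-hour washing cycle.
start = cheapest_start_hour(tariff, duration_h=2)
print(f"run the cycle starting at {start:02d}:00")
```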

Your Work and Study (Korea First)

Faster adoption through the authorized reseller path (including ChatGPT Enterprise): enterprise deployments without a drawn-out integration effort, and with local compliance, which shows up as stability across your daily tools.

Practical Sustainability

Coastal siting reduces cooling energy; floating power plants later open cleaner energy markets—this isn’t environmental decoration, but real reduction in long-term service cost.

Quick Comparison — Why This Approach Differs

From memory in the back seat to memory in the front seat: From treating DRAM as a detail to making it the element that sets the pace of expansion.

From centers managed ad hoc to centers as product: Single owner of outcomes, measurable service-level objectives, and drama-free upgrades.

From purely land-based campuses to floating capacity: Improved cooling economics, less land pressure, and deployment flexibility where demand actually forms.

Direct Questions with Brief Answers

Are we looking at a final contract?

It’s a letter of intent—a roadmap for phased execution: DRAM expansion, advanced packaging, and operation of engineered AI centers, with exploration of floating data centers.

What’s the benefit of “up to 900,000 DRAM wafers per month”?

It’s literally widening the road: higher bandwidth, lower latency, and ability to absorb peak demand without a bottleneck in memory.

Why keep technical terms like advanced packaging and heterogeneous integration instead of plainer wording?

Because they carry more precise engineering meaning than looser paraphrases; keeping them avoids ambiguity that would harm understanding.

Are floating centers just a marketing idea?

No. It’s a siting approach that improves power usage effectiveness, reduces cooling costs, and opens lower-emission pathways—backed by maritime and engineering expertise from an industry that knows how to work on water, not just talk about it.

How does SDS’s role impact me as a user?

When centers are managed with clear ownership from design to operations, updates become faster and quieter, and reliability becomes habit, not accident.

Quiet Technology Wins in the End

Great technology isn’t the kind that makes noise, but the kind that reduces the noise in your day. For AI to shift from promise to habit means designing memory, packaging, location, and operations as one orchestra: context reaching compute at the right time, centers that breathe with the load, and location that lowers cooling cost and supports cleaner power.

The strength of this approach is that it’s both physical and human: from wafer to living room, from port to data packet. And when these layers integrate, you don’t need to notice the intelligence to appreciate it; you’ll notice the absence of annoyance: less waiting, fewer interruptions, batteries that last longer, and an experience that doesn’t stutter when everyone crowds in at once.

If the last decade made AI available, the next decade will make it available on time—and that’s the real luxury in a fast world that waits for no one.